Anthropic reveals the next billion-dollar AI agent opportunity. | Mark Cuban’s AI thesis - $495B hidden AI market.

Is AI quietly eliminating customer support jobs? The FBI pricing negotiation tactic every founder should know.

👋 Hey, Sahil here — Welcome back to Venture Curator, where we explore how top investors think, how real founders build, and the strategies shaping tomorrow’s companies.

Big idea + report of the week:

Is AI quietly eliminating customer support jobs?

Should LPs back small VC funds for upside or large funds for stability?

Frameworks & insightful posts:

Anthropic Research: Where AI agents are actually being deployed and where the real opportunity lies.

Mark Cuban’s AI Thesis: The $495B AI opportunity that most founders are missing.

The FBI negotiation tactic founders should use in every pricing call.

FROM OUR PARTNER - FRAMER

🤝 Launch fast. Design beautifully. Build your startup on Framer — free for your first year.

First impressions matter. With Framer, early-stage founders can launch a beautiful, production-ready site in hours. No dev team, no hassle. Join hundreds of YC-backed startups that launched here and never looked back.

Pre-seed and seed-stage startups new to Framer can enjoy:

One year free: Save $360 with a full year of Framer Pro, free for early-stage startups.

No code, no delays: Launch a polished site in hours, not weeks, without hiring developers.

Built to grow: Scale your site from MVP to full product with CMS, analytics, and AI localisation.

Join YC-backed founders: Hundreds of top startups are already building on Framer.

Apply to claim your free year →

🤝 PARTNERSHIP WITH US

Get your product in front of an audience of 103,000+. Our newsletter is read by thousands of tech professionals, founders, investors and managers worldwide. Get in touch today.

START WITH

🧠 Big idea + report of the week

Is AI quietly eliminating customer support jobs?

For years, AI replacing jobs felt theoretical. Now we’re starting to see clean data.

Jason Lemkin recently shared hiring numbers from Pave covering 386,500 new hires, and the shift in Customer Support is dramatic. In Q4 2023, support roles accounted for 8.30% of new hires. By Q3 2025, that number fell to 2.88%.

That’s a roughly 65% decline in two years. And almost half of that drop happened in just the last three quarters.
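For anyone who wants to check the headline number, here is a minimal sketch of the arithmetic using only the two Pave shares quoted above. The function and variable names are made up for illustration.

```python
# Share of new hires going to customer support, from the Pave figures quoted above.
share_q4_2023 = 8.30  # percent of new hires, Q4 2023
share_q3_2025 = 2.88  # percent of new hires, Q3 2025


def relative_decline(start: float, end: float) -> float:
    """Return the drop from start to end as a percentage of start."""
    return (start - end) / start * 100


# Absolute drop, in percentage points of all new hires.
point_drop = share_q4_2023 - share_q3_2025
# Relative drop, as a percentage of the starting share.
decline_pct = relative_decline(share_q4_2023, share_q3_2025)

print(f"Drop in share: {point_drop:.2f} percentage points")
print(f"Relative decline: {decline_pct:.1f}%")
```

This works out to a 5.42-point drop in share, or about 65.3% in relative terms, which is where the "roughly 65%" comes from.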

This isn’t gradual automation. It’s an accelerating adoption curve.

Lemkin also shared a concrete operating example:

One company went from 20+ support employees to just 3 humans supported by AI agents, while revenue growth swung from -19% to +47% YoY during the transition. AI now handles the majority of inbound volume.

But the real story isn’t that support is disappearing. It’s being redesigned.

What’s actually happening inside companies:

AI is now Tier 1 support.

Basic ticket routing, FAQs, account updates, and policy explanations are increasingly handled by AI at 40–60%+ deflection rates without degrading quality.

Entry-level ticket roles are shrinking.

The classic $50K generalist support rep, handling high-volume, repetitive tasks, is fading.

Higher-skilled human roles are expanding.

What remains requires judgment: technical escalations, implementation, complex integrations, revenue-sensitive accounts. These roles often pay $100K+ and look more like hybrid Customer Success positions.

Support is merging upward into CS.

When AI handles half the tickets, the remaining human work becomes proactive:

onboarding

expansion conversations

churn prevention

relationship building

That’s not elimination. That’s elevation. The more interesting implication is strategic.

Support is simply the easiest category to automate first:

high volume

structured queries

well-documented answers

low tolerance for long response times

It’s the cleanest training ground for AI agents. And that makes it a leading indicator.

The same pattern is likely to come for:

SDR roles handling repetitive outbound

Parts of marketing ops and content workflows

QA and testing functions

Mid-level product ops work

Any function where AI can reliably handle 40-60% of structured volume without degrading output quality becomes vulnerable to redesign.

For founders, the takeaway isn’t “cut headcount.” It’s this:

If AI can handle the repetitive layer of your function, redesign the human layer above it.

Companies that simply reduce costs miss the upside. The real leverage shows up when:

Response times collapse

Coverage becomes 24/7

Humans shift to revenue-impacting work

Cost per ticket drops while CSAT improves

The 65% drop in support hiring doesn’t mean companies care less about customers. It means the best ones have figured out a new operating model.

The window to adapt is shrinking. Support was first because it was easiest. It won’t be the last.

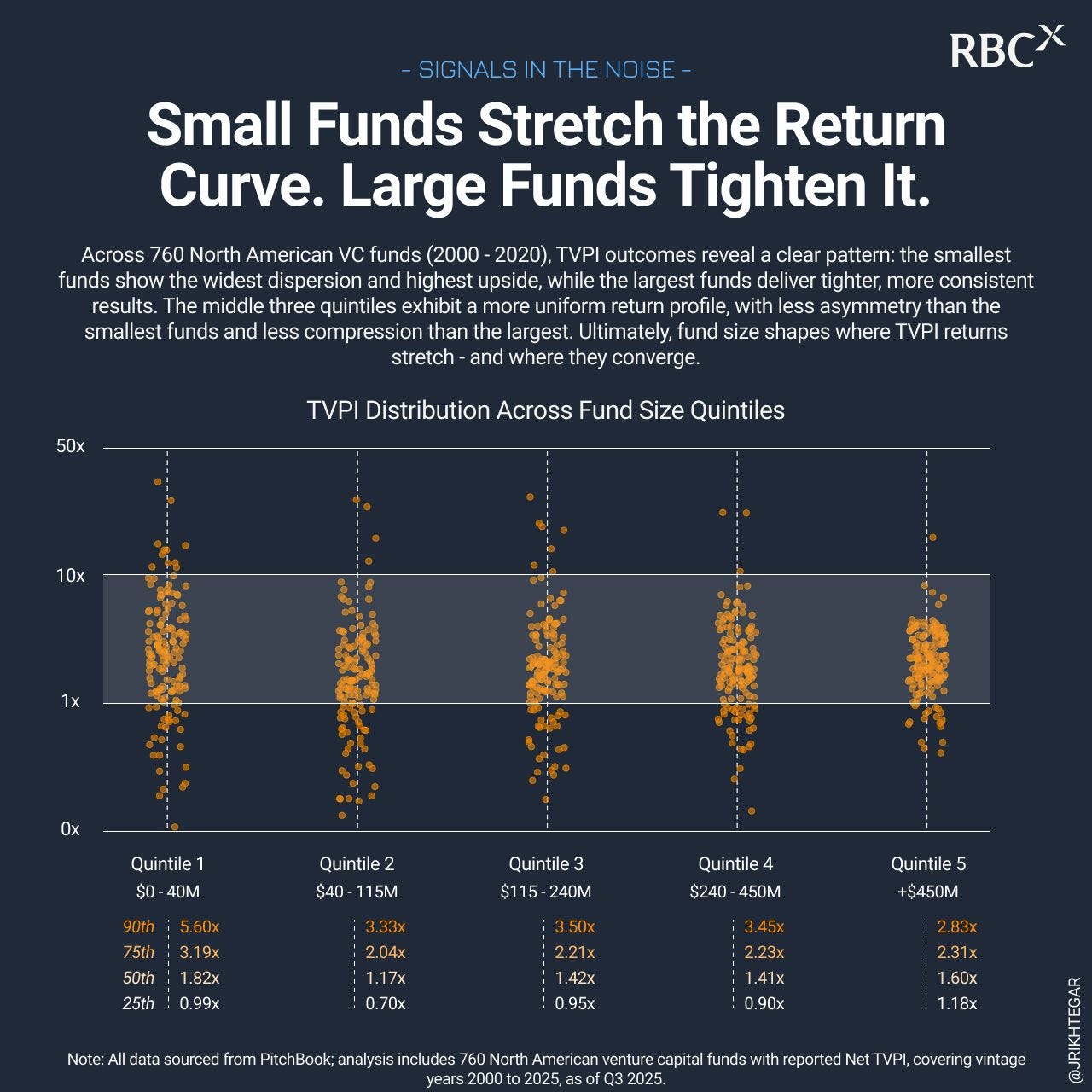

Should LPs back small VC funds for upside or large funds for stability?

For years, the default narrative has been simple: small funds outperform.

But when you look at the full distribution of outcomes, the story is more nuanced and more structural.

John Rikhtegar recently analysed 760 North American VC funds (vintages 2000–2020) using PitchBook Net TVPI data and split them into five fund-size quintiles. Instead of focusing only on averages, he plotted the entire distribution of returns.

The pattern was clear.

Small funds stretch the return curve.

Large funds compress it.

The middle is where things get uncomfortable.

Here’s what the dispersion shows.

Quintile 1 ($0–$40M funds):

90th percentile ≈ 5.6x

Median ≈ 1.82x

25th percentile ≈ 0.99x

Wide spread. Real asymmetry. Real volatility.

Quintile 5 ($450M+ funds):

90th percentile ≈ 2.83x

Median ≈ 1.60x

25th percentile ≈ 1.18x

Tighter clustering. Fewer blowouts, in both directions.

Interestingly, both the smallest and the largest funds outperformed the three middle quintiles across top, median, and bottom quartile marks. The middle 60% of funds showed less asymmetry and less consistency, the least attractive combination.

So what’s structurally happening?

Small funds benefit from ownership math.

When a $25M fund owns 10–15% of a breakout company, even a “modest” $500M–$1B exit can move the entire vehicle meaningfully. The fund doesn’t need a $20B outcome to generate a 3–5x net return. Ownership concentration turns realistic wins into fund-level impact.

That’s the asymmetry LPs are buying.

But that asymmetry comes with volatility. The dispersion is wide. Bottom quartile outcomes are materially weaker.
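The ownership math above can be sketched in a few lines. This is a rough illustration, not Rikhtegar's method: it assumes the 10–15% stake is what the fund still holds at exit, and it computes gross proceeds before fees and carry, so net multiples would be lower. The function name is made up for the example.

```python
def gross_fund_multiple(fund_size_m: float, ownership: float, exit_value_m: float) -> float:
    """Gross proceeds from a single exit divided by fund size (both in $M)."""
    return ownership * exit_value_m / fund_size_m


fund_size_m = 25.0  # the $25M fund from the example above

# Ownership at exit and exit values taken from the ranges in the text.
for ownership in (0.10, 0.15):
    for exit_value_m in (500.0, 1000.0):
        multiple = gross_fund_multiple(fund_size_m, ownership, exit_value_m)
        print(f"{ownership:.0%} of a ${exit_value_m:,.0f}M exit -> {multiple:.1f}x the fund (gross)")
```

Under these assumptions a single exit returns between 2.0x and 6.0x the fund on a gross basis, which is why one realistic win can carry a small vehicle.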

Large funds operate under a different design constraint.

At $1B+, a single $1B exit barely moves the needle. These funds need multi-billion-dollar outcomes or repeated solid wins to drive returns. As a result, their distribution tightens. They deliver steadier profiles and often meaningful absolute dollars returned to LPs, even if multiples are lower.

For many institutions, that matters.

One large GP relationship can return substantial capital without managing dozens of small fund exposures. Operational simplicity and capital recycling predictability are part of the appeal.

The middle segment is where it gets tricky.

Across the three middle quintiles ($40M–$450M), 3x net returns appear mostly in the top decile. That makes top-decile selection increasingly critical. If you’re not picking the best managers in that band, the return math struggles — especially when LPs can allocate to shorter-duration private credit or liquid public markets.

A few practical implications emerge.

If an LP wants asymmetry and can tolerate dispersion, small funds structurally offer it.

If an LP prioritises steadier capital return and relationship scale, large funds fit better.

If allocating to mid-sized funds, top-decile selection becomes non-negotiable.

Fund size isn’t just a branding choice. It shapes the return curve itself. The dispersion isn’t random. It’s structural, tied to ownership, check size, and how much a single win can move the vehicle.

The real question isn’t “small or large?” It’s whether the fund size aligns with the kind of return profile you’re underwriting.

FROM OUR PARTNER - SEMRUSH

📈 See what drives your competitors’ growth — before they do

Every winning strategy starts with the right data. With Semrush’s Traffic & Market Toolkit, you can uncover exactly where your competitors’ traffic comes from — search, social, or even AI referrals.

Track their top pages, monitor emerging trends, and find the untapped channels fueling their growth. Whether you’re a marketer, agency, or enterprise, you’ll get daily and weekly insights to outpace rivals and lead your market with confidence. Trusted by 10M+ marketers worldwide.

Try the Traffic & Market Toolkit free →

SOMETHING MORE

🧩 Frameworks & insightful posts

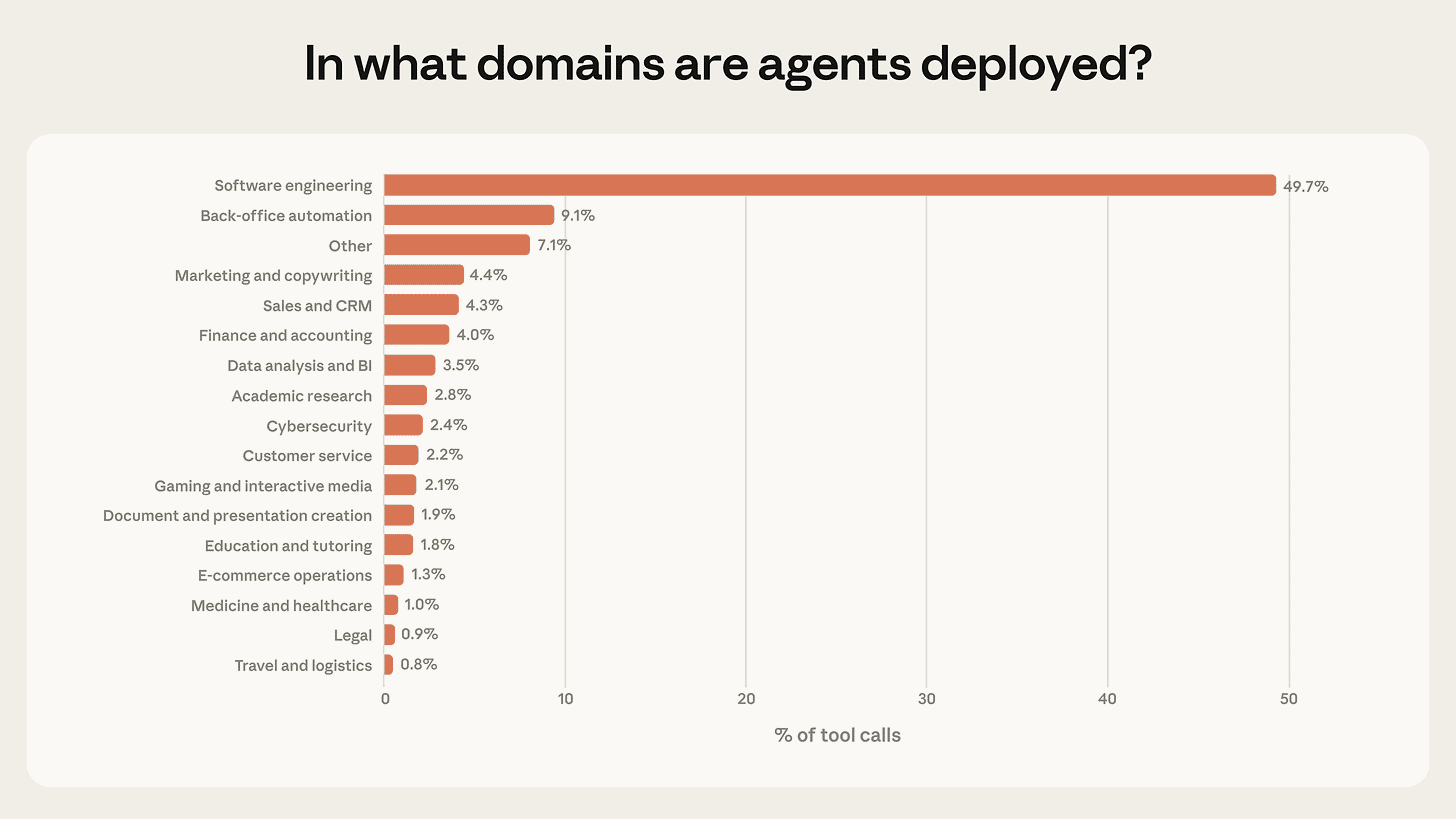

Anthropic Research: Where AI agents are actually being deployed and where the real opportunity lies.

There’s a lot of noise around AI agents. But if you strip away the hype and look at real-world deployment data, a clearer picture emerges: agents are here, they’re gaining autonomy, and they’re concentrated in very specific domains, for now.

Anthropic analysed millions of real human-agent interactions across Claude Code and its public API. Instead of speculating about the future, they studied how agents are being used in practice.

And the results are revealing.

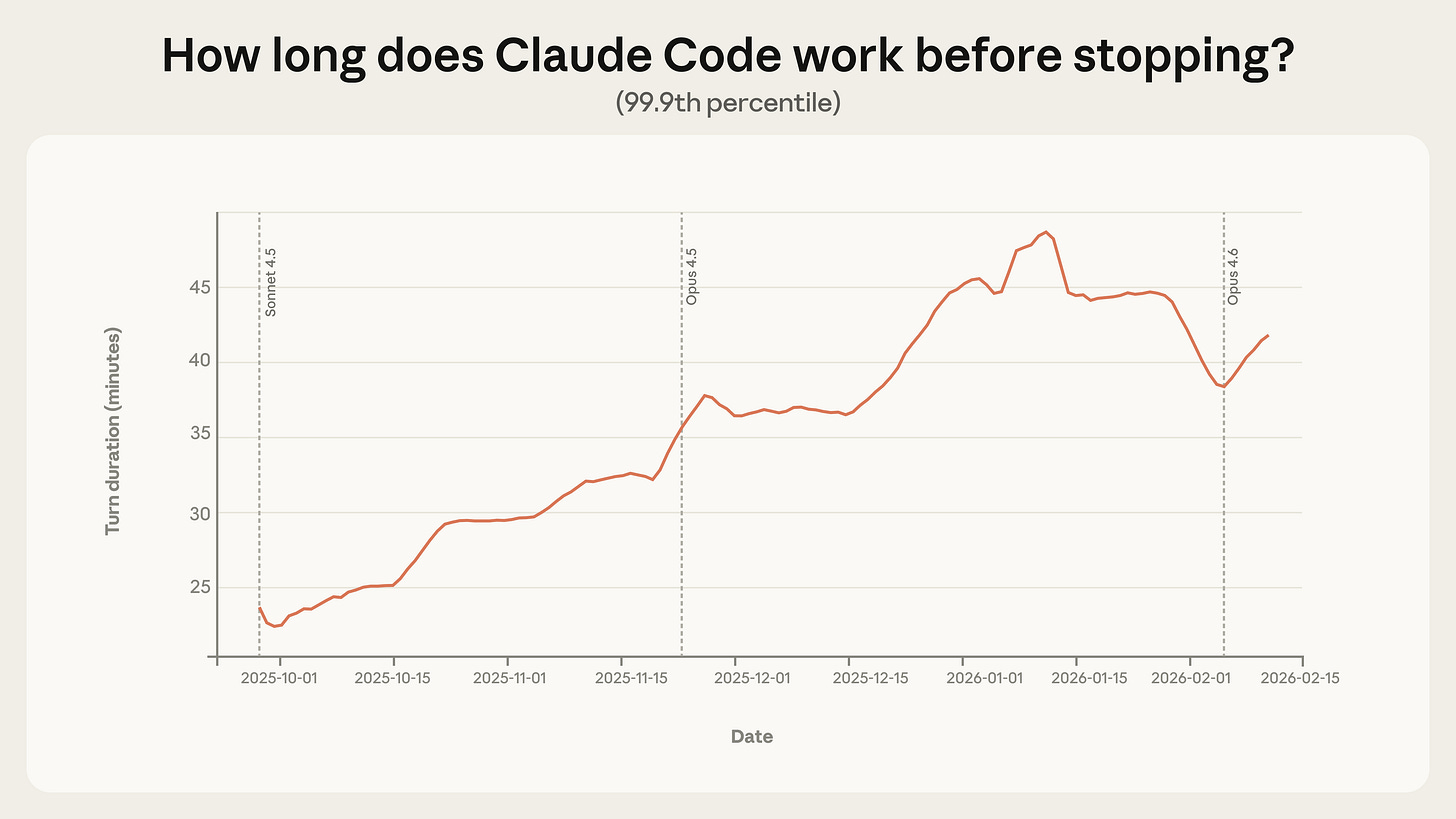

First: Autonomy Is Increasing, But Carefully

Most Claude Code sessions are still short. The median turn lasts around 45 seconds.

But the extreme tail tells a different story. In just three months, the 99.9th percentile turn duration nearly doubled, from under 25 minutes to over 45 minutes. Importantly, this growth was smooth across model releases. That suggests the shift isn’t purely about new model capability. It’s about usage patterns.

Users are granting agents more autonomy. As people gain experience:

New users manually approve each action.

By 750 sessions, over 40% of sessions are fully auto-approved.

Interrupt rates rise from 5% (new users) to 9% (experienced users).

This indicates something important: oversight strategy evolves. Users move from micro-approval to delegation + intervention. They let agents run, but step in when needed.

That behavioural shift is foundational for domain expansion.

Because before agents move into higher-stakes environments, humans need to learn how to supervise them efficiently.

Where AI agents are actually being deployed today

Right now, deployment is highly concentrated.

Software Engineering (~50% of agentic activity)

Software engineering dominates agent usage. Nearly half of all tool calls on Anthropic’s public API fall into this domain.

This includes:

Writing and modifying code

Deploying bug fixes

Running tests

Monitoring system health

Managing development workflows

Why engineering first?

Because:

Outputs are testable.

Mistakes are usually reversible.

Developers understand the systems.

Feedback loops are tight.

Engineering is the ideal sandbox for autonomy.

This is where agents are becoming operational infrastructure, not just assistants.

Emerging Domains (Still Small, But Growing)

Outside of engineering, we see early experimentation in:

Healthcare (retrieving medical records, workflow support)

Finance (automated transactions, reporting)

Cybersecurity (red team simulations, vulnerability scanning)

Customer support (triaging tickets, drafting responses)

Sales and business intelligence workflows

These are not yet dominant. But they are strategically important.

They represent the transition from “tool for developers” to “operational layer for businesses.”

And here’s the key: most of these deployments are still low-risk.

Anthropic found:

73% of tool calls appear to have a human in the loop.

80% involve some safeguard (permission systems, approval gates).

Only 0.8% appear irreversible (like sending a live customer email).

That means we’re early. Very early.

Agents are being tested in higher-stakes domains, such as security systems, financial transactions, and production deployments, but not yet at a massive scale.

The frontier exists. It just hasn’t fully expanded.

The Real Opportunity Isn’t in Coding, It’s in Domain Expansion

The engineering domain is saturated with early adopters. The next major wave will come from vertical expansion.

Think about what happens when agents move beyond code:

In healthcare → patient workflow automation, claims processing, and triage support.

In finance → compliance checks, reconciliation, and autonomous trading logic.

In cybersecurity → real-time threat response.

In operations → procurement, vendor management, reporting pipelines.

In customer service → full resolution systems, not just drafting replies.

Each of these domains increases both risk and autonomy. And that’s where the opportunity lies.

Right now, most businesses are experimenting at the “low-risk, reversible” layer.

The gap sits between: “I used a chatbot to draft something” and “AI autonomously runs this workflow with monitoring.”

That gap is enormous and largely unsolved.

Why Monitoring Infrastructure Becomes the Next Layer

Anthropic emphasises something subtle but critical: post-deployment monitoring will become essential as autonomy expands.

This is where most founders are underestimating the opportunity.

As agents move into higher-stakes environments, businesses will need:

Autonomy dashboards

Risk scoring systems

Intervention triggers

Audit logs

Escalation frameworks

Clarification training (teaching models when to stop)
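To make a few of these pieces concrete, here is a toy sketch of a risk score, an intervention trigger, and an audit log working together. Everything in it is hypothetical: the `ToolCall` fields, the scoring weights, and the threshold are illustrative assumptions, not anything Anthropic describes.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    name: str
    reversible: bool       # can the action be undone?
    customer_facing: bool  # does it touch a live customer?


def risk_score(call: ToolCall) -> int:
    """Toy score from 0 (low) to 3 (high). The weights are illustrative only."""
    score = 0
    if not call.reversible:
        score += 2
    if call.customer_facing:
        score += 1
    return score


def route(call: ToolCall, approval_threshold: int = 2) -> str:
    """Intervention trigger: escalate to a human at or above the threshold."""
    if risk_score(call) >= approval_threshold:
        return "needs_human_approval"
    return "auto_approved"


# Audit log: every decision is recorded, whether or not a human was involved.
audit_log = []
for call in [
    ToolCall("run_tests", reversible=True, customer_facing=False),
    ToolCall("draft_reply", reversible=True, customer_facing=True),
    ToolCall("send_customer_email", reversible=False, customer_facing=True),
]:
    decision = route(call)
    audit_log.append((call.name, risk_score(call), decision))
    print(call.name, risk_score(call), decision)
```

In this sketch, running tests and drafting a reply go through automatically, while sending a live customer email (irreversible and customer-facing) is held for approval. A real system would need far richer signals, but the shape is the same: score the action, gate on the score, log the decision.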

Interestingly, Claude Code already pauses for clarification more often than humans interrupt it on complex tasks. That suggests that self-awareness and uncertainty recognition are becoming safety levers.

But scaling this across industries requires tooling, not just better models.

The next wave of AI startups may not be:

Bigger models

More tokens

Faster inference

It may be:

Agent orchestration platforms

Risk-layer infrastructure

Monitoring + intervention systems

Verticalized deployment frameworks

So right now, AI agents are heavily concentrated in software engineering because that’s where:

Errors are reversible

Oversight is natural

Technical users exist

But as trust and autonomy increase, the frontier expands.

And that expansion will likely follow this path:

Low-risk engineering automation

Internal operational workflows

Customer-facing systems with guardrails

Financial and compliance-heavy domains

High-risk autonomous decision systems

Each step increases economic value.

The largest opportunity does not sit in the current 50% of tool calls. It sits in the 50% that hasn’t yet scaled.

If you’re building in AI agents, the strategic question isn’t “How autonomous can we make this?”

It’s:

Which domain can tolerate autonomy next?

How do we build oversight infrastructure for it?

How do we make risk visible and manageable?

How do we train both humans and models to share control?

Because the data suggests we are not at peak autonomy. We are at an early stage of controlled experimentation.

And the companies that solve domain-specific autonomy safely, visibly, and measurably will capture the next phase of agent expansion.

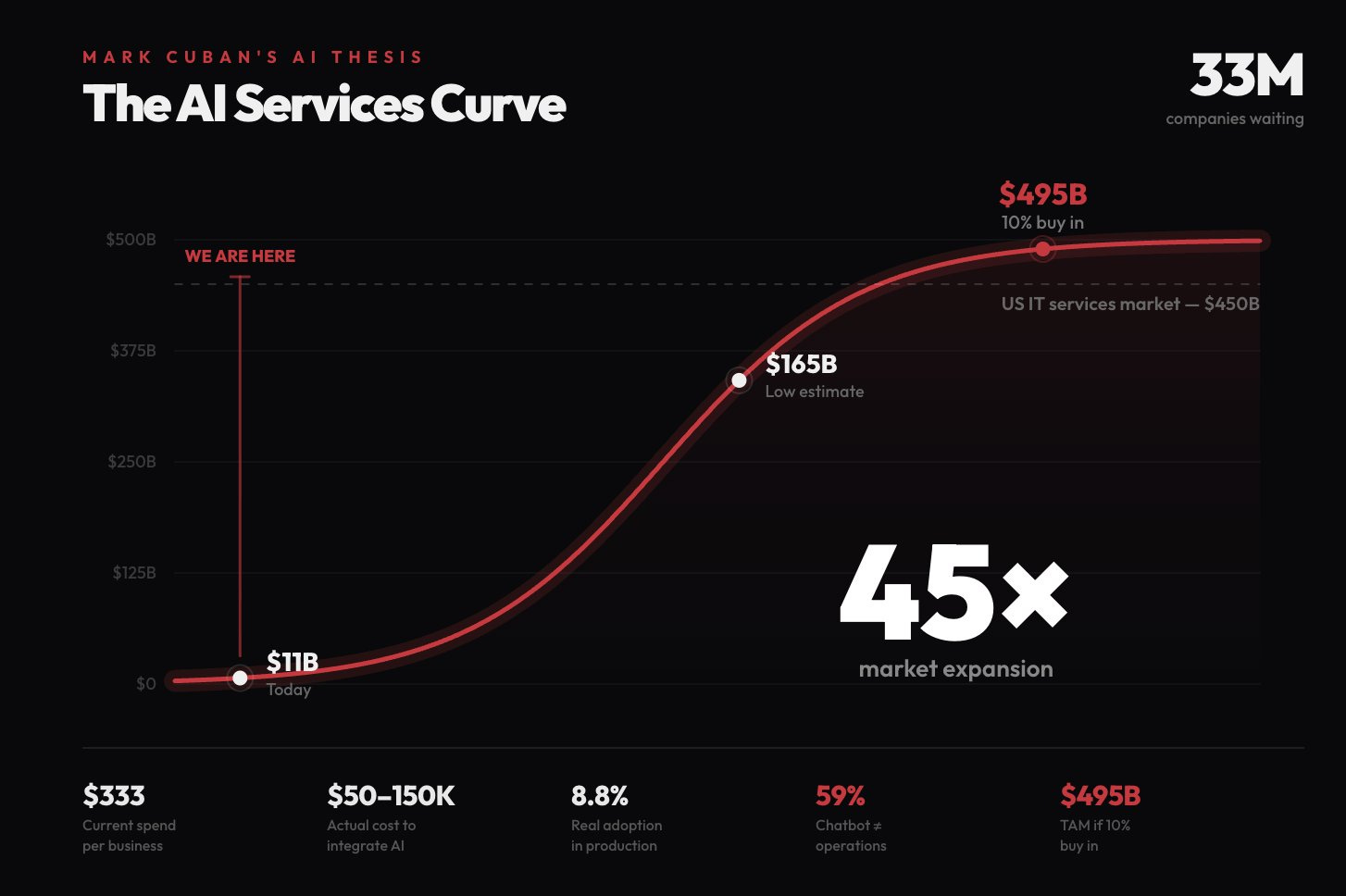

Mark Cuban’s AI Thesis: The $495B AI opportunity that most founders are missing.

Most founders are chasing the wrong layer of the AI stack.

They think the opportunity is in building the next foundation model, the next ChatGPT, or the next AI-native SaaS unicorn. But when Mark Cuban calls AI the biggest job creation wave since the internet, he’s not talking about model labs.

He’s talking about services. And when you look at the numbers, the thesis becomes hard to ignore.

There are 33 million businesses in the United States that need AI integration. Yet only 8.8% actually have AI in production. The current AI services market sits around $11B, but the projected opportunity ranges between $165B and $495B. Even a modest 10% buy-in implies nearly half a trillion dollars in addressable spend.

That’s not incremental growth. That’s a structural shift.
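A quick back-of-envelope check helps show what these figures imply. The thesis as quoted does not say how the $165B–$495B range was derived, so the sketch below only back-solves: if 10% of the 33 million businesses bought in, how much would each have to spend for the market to reach either end of the range? The variable and function names are invented for the example.

```python
businesses = 33_000_000          # US businesses, as quoted above
buy_in_rate = 0.10               # the "modest 10% buy-in" from the text
current_market_b = 11            # current AI services market, in $B
projected_range_b = (165, 495)   # projected opportunity, in $B

adopters = round(businesses * buy_in_rate)


def implied_spend_per_adopter(market_b: float, adopters: int) -> float:
    """Annual spend per adopting business needed to reach a market of market_b ($B)."""
    return market_b * 1e9 / adopters


for market_b in projected_range_b:
    spend = implied_spend_per_adopter(market_b, adopters)
    growth = market_b / current_market_b
    print(f"${market_b}B -> about ${spend:,.0f} per adopting business, {growth:.0f}x today's market")
```

On these assumptions, 3.3 million adopting businesses would need to spend roughly $50,000 to $150,000 each, and the projected range is 15x to 45x the current $11B market.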

The real gap isn’t between “no AI” and “ChatGPT access.” It’s between experimentation and operational transformation.

Most businesses today fall into the illusion of adoption. You’ll hear that 68% of companies use AI. But in practice, that often means:

Someone in marketing prompts ChatGPT for email drafts

A manager uses AI to summarise documents

Teams experiment with generic copilots

That’s surface-level usage. It doesn’t touch the core of how the business runs.

The distance between “I used a chatbot” and “AI runs parts of our operations” is massive. And that distance is where the real opportunity sits.

What businesses actually need isn’t another SaaS subscription. They need someone who can walk into their company, understand how work flows, and design AI systems that execute real tasks.

That requires a different skillset than building models from scratch. The opportunity lies in agent orchestration.

Most companies don’t struggle with access to AI. They struggle with integration. No tool, on its own, can:

Map messy workflows

Identify repetitive decision loops

Connect CRM, support, finance, and internal tools

Design escalation paths

Monitor performance and reliability

Continuously improve outputs

This is not a feature problem. It’s a systems problem.

Agent orchestration is about turning AI from a chatbot into an operational layer. Instead of “AI helping,” it becomes “AI doing.”

That might mean:

Support tickets auto-resolved with guardrails

Sales leads pre-qualified before a human touches them

Internal reporting generated autonomously

Contract review automated end-to-end

Repetitive back-office tasks delegated to agents

When you frame it that way, the job creation thesis becomes clearer. Every one of those 33 million businesses needs someone who can:

Understand business incentives

Translate processes into logic

Deploy agentic workflows

Build safeguards

Iterate based on results

And most of those people will not be pure engineers.

The next high-demand profile in tech isn’t necessarily the person training foundation models. It’s the translator, someone who understands both business operations and agent systems. Former operators, product leaders, consultants, technical generalists, people who can bridge workflows and orchestration.

That’s why betting on agent orchestration makes sense. The foundation models are largely built. The APIs exist. The tools are improving weekly. What’s missing is deployment at scale.

If you’re building in AI right now, the real strategic question is not whether your product uses the best model. It’s whether you are closing the gap between curiosity and execution.

Because the $11B market today isn’t the ceiling. It’s the starting point. The expansion to $165B-$495B won’t come from better demos. It will come from businesses that move from “AI experiment” to “AI runs part of our company.”

That transition requires people who can design, implement, and operate agent systems inside real-world constraints.

And those people are about to become some of the most in-demand operators in tech. Not because they built AI. But because they made it work.

The FBI negotiation tactic founders should use in every pricing call.

Most founders negotiate the wrong way.

When a vendor raises prices, an enterprise customer pushes back, or a partner squeezes terms, we default to logic. We explain budgets. We justify usage. We ask for a “win-win.” We try to meet halfway.

And that’s usually a losing move.

A Reddit post recently broke down a tactic inspired by Chris Voss, former FBI international kidnapping negotiator and author of Never Split the Difference.

His core idea flips traditional business logic on its head: “Let’s meet halfway” and “win-win” often weaken your leverage. The real move is tactical empathy combined with no-oriented, calibrated questions.

The surprising part? AI can execute this framework better than most humans.

Here’s how founders can use it:

The core shift: stop trying to get to “yes”

In most negotiations, we push for agreement. We try to get the other side to say yes.

But Voss argues that “yes” is often meaningless. It can be a polite brush-off. A way to end the conversation. What you actually want to hear is: “That’s right.” That signals you’ve understood their position so clearly that they feel seen.

Let’s take a SaaS renewal scenario: A vendor attempted to raise pricing by 20%. Instead of writing a long, polite email asking for a discount with budget logic and apologies, the person used ChatGPT, but with strict constraints.

The key was telling AI what NOT to do:

No logic.

No budget arguments.

No apologising.

No asking directly for a discount.

Instead, the AI was instructed to use labelling (phrases like “It seems like…” or “It sounds like…”), followed by what Voss calls a calibrated question: a how-or-what question that forces the other side to solve your problem.

The output was almost uncomfortable in its simplicity:

“It seems like you’re under a lot of pressure to increase revenue this quarter. It sounds like you feel that our current pricing is unfair to the value you provide. How am I supposed to agree to a 20% increase when our usage has remained flat?”

That was the entire email.

No pleasantries. No “hope this finds you well.” No emotional padding. Just psychological judo.

The result? The vendor dropped the increase entirely and locked in the old rate for two years.

Why this works (especially on sales reps)

Sales teams are trained to handle objections. They have scripts ready for:

“It’s too expensive.”

“I need to check with my boss.”

“Can you do better on price?”

But they don’t have scripts for: “How am I supposed to do that?”

That kind of calibrated question bypasses their defensive scripts. It shifts the conversation from defending price to solving a problem.

Instead of negotiating against you, they begin negotiating with themselves.

That’s the power move.

How founders can apply this in real scenarios

This isn’t just for vendor renewals. It applies across a founder’s life:

When raising capital, instead of pushing valuation logic, you might say:

“It seems like you’re concerned about the durability of our growth. What would need to be true for this to feel like a must-invest?”

When negotiating enterprise contracts:

“It sounds like you’re trying to minimize risk on your side. How are we supposed to commit engineering resources without clearer deployment timelines?”

When a partner pushes unfavourable terms:

“It seems like you’re trying to optimize for flexibility. How does that align with building a long-term strategic relationship?”

Notice the pattern:

Start with empathy (“It seems like…”)

Reflect their likely pressure or concern

End with a how/what question that forces them to engage

The AI layer founders miss

ChatGPT defaults to being helpful and professional. Which means it tends to soften language, over-explain, and write long rational justifications, exactly the kind of negotiation emails that get ignored.

The trick isn’t just asking AI to write the email.

It’s telling AI what NOT to do:

Don’t argue logically.

Don’t justify.

Don’t plead.

Don’t ask directly for the concession.

Strip the logic. Add empathy. Ask the calibrated question.

You’re not trying to overpower the other side. You’re trying to create a moment where they pause and think: “That’s fair. How do we solve this?”

That’s when you’ve shifted control.

For founders, this matters more than it seems

Founders negotiate constantly:

Pricing with vendors

Terms with investors

Discounts with customers

Equity splits with hires

Strategic partnerships

Most of these negotiations fail not because of numbers, but because of positioning.

The founders who win aren’t the most aggressive. They’re the ones who frame the conversation so the other party feels understood and then must justify their own position.

The question isn’t whether this tactic works.

The question is whether you’re disciplined enough to stop explaining and start asking: How am I supposed to do that?

You can use this prompt:

Role: Act as Chris Voss (FBI Negotiator).

Task: Rewrite the negotiation email with extreme tactical empathy.

Specific Instructions:

- The Label (Pressure): Start by hypothesizing that the rep is being pushed by management to hit quarterly revenue targets. Label that emotion.

- The Label (Value): Validate that they probably think their software is worth more than I'm paying.

- The Reality: Mention that my usage is flat.

- The Calibrated Question: Combine the price increase and the flat usage into a "How" question that makes the increase impossible to accept without saying "no."

Style constraints:

- Remove all fluff. No greetings. No sign-offs.

- Do not be aggressive; be confused but deferential.

EXPLORE MORE

💡 Reports, articles and a few interesting reads

Fintech VC valuations race ahead in Europe. (Link)

The $135B agent effect: Why VCs aren’t pulling back from SaaS. (Link)

From 43 failed projects to an OpenAI acquisition in 90 days. (Link)

Check out these prompts that Anthropic, Google and OpenAI researchers use daily. (Link)

How Anthropic’s marketing team uses Claude Code. (Link)

Who’s left in the 10x ARR club? The incredible shrinking elite of public B2B companies. (Link)

Anthropic economic index report: economic primitives. (Link)

NEWS RECAP

🗞️ This week in startups & VC

New In VC

First Cressey Ventures, a Nashville, Tenn.-based healthcare venture capital firm, closed Fund IV at $425m. (Link)

Dragonfly Capital, an Oakland, CA-based venture capital firm focused on backing blockchain and fintech startups, raised $650m for its fourth fund. (Link)

Battery Ventures, a global, technology-focused investment firm, closed a $3.25 billion fund. (Link)

Thrive Capital, a New York-based venture firm founded by Josh Kushner, has raised $10B for Thrive X, its largest fund to date. (Link)

Kindred Ventures, a San Francisco, CA-based venture capital firm, raised approx. $227m across two funds. (Link)

New Startup Deals

Fireplace, a Hong Kong-based provider of a professional trading terminal for prediction markets, raised $1.5M in Pre-Seed funding. (Link)

ARCYN Defense, a Washington, DC-based defense technology company, raised an undisclosed amount in Seed funding. (Link)

The Compression Company, a San Francisco, CA-based provider of a data compression platform, raised $3.4M in Pre-Seed funding. (Link)

Maestro AI, a Parkland, FL-based provider of a vertical artificial intelligence (AI)-powered platform built for mortgage origination, raised $1.2M in Pre-Seed. (Link)

The Bland Company, a London, UK-based developer of high-performance plant-based proteins, raised $2.67M in pre-seed funding. (Link)

Project Omega, a Washington, D.C.-based developer of advanced nuclear recycling technology, raised $12m in Seed funding. (Link)

TODAY’S JOB OPPORTUNITIES

💼 Venture capital & startup jobs

All-In-One VC Interview Preparation Guide: With a leading investor group, we have created an all-in-one VC interview preparation guide for aspiring VCs. Don’t miss this. (Access Here)

Ventures Associate - Plug and Play Tech Centre | USA - Apply Here

Visiting Analyst - Redalpine | UK - Apply Here

Operations Associate - Initialised Capital | USA - Apply Here

Associate - Primary | USA - Apply Here

Investor Relations - SC Founders Fund | India - Apply Here

VC Analyst - Alstin Capital | Germany - Apply Here

Analyst - Infrastructure & Real Assets - Stepstone Group | Australia - Apply Here

Social Media Freelancer - Initialised Capital | USA - Apply Here

Analyst / Associate - Saints Capital | USA - Apply Here

Investment Analyst Intern - NAV Capital | Dubai - Apply Here

PE & VC Partner Manager - Dealhub | UK - Apply Here

VC Platform & Events Manager - 1001 VC | USA - Apply Here

MBA Venture Capital Intern - Cerity Partners | USA - Apply Here

"Most companies don’t struggle with access to AI. They struggle with integration"

Hard agree. Integration is where most of the Late Majority and Laggards will be in the AI adoption journey.

And I think the consulting sector will be in this bucket.